Overview

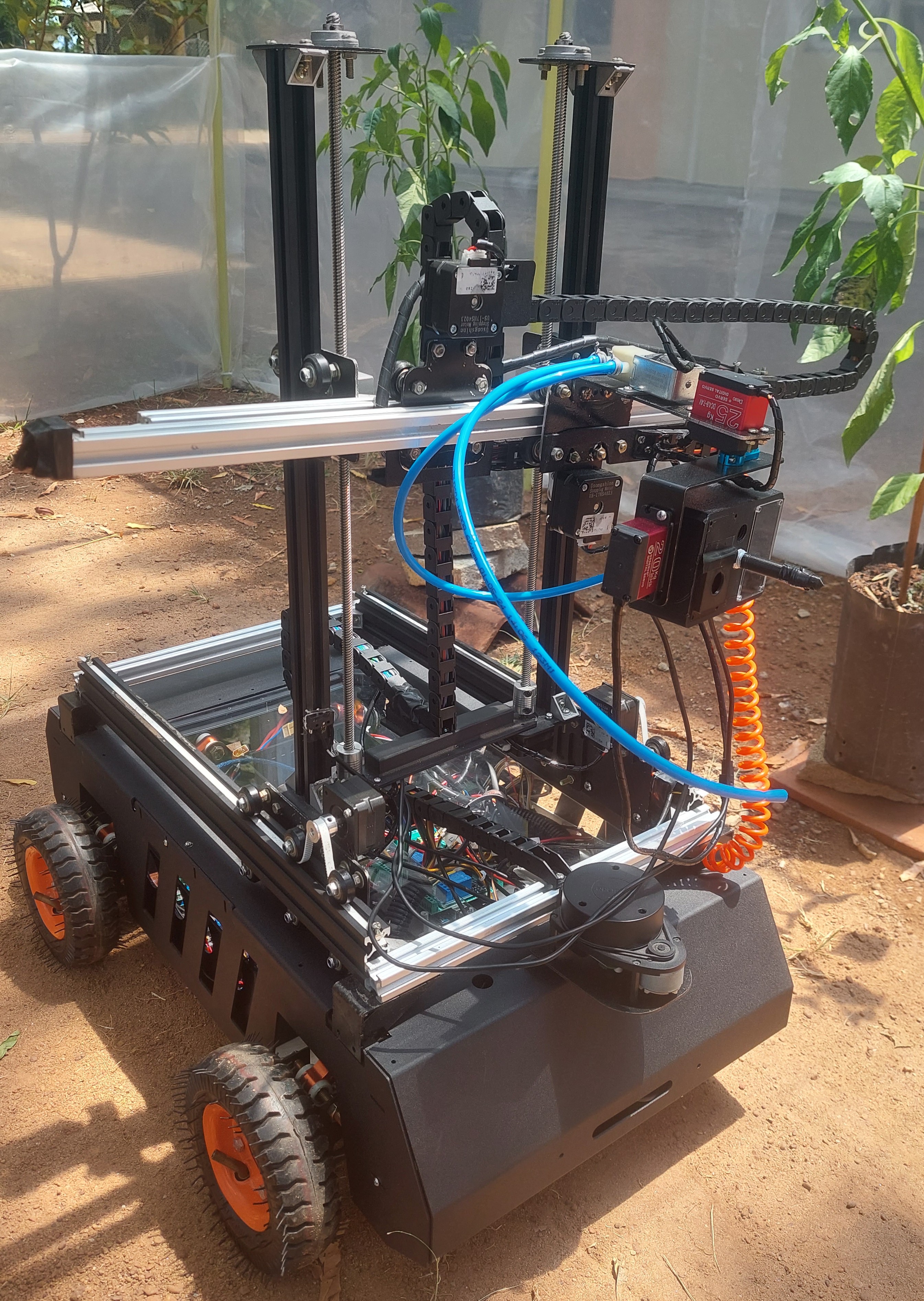

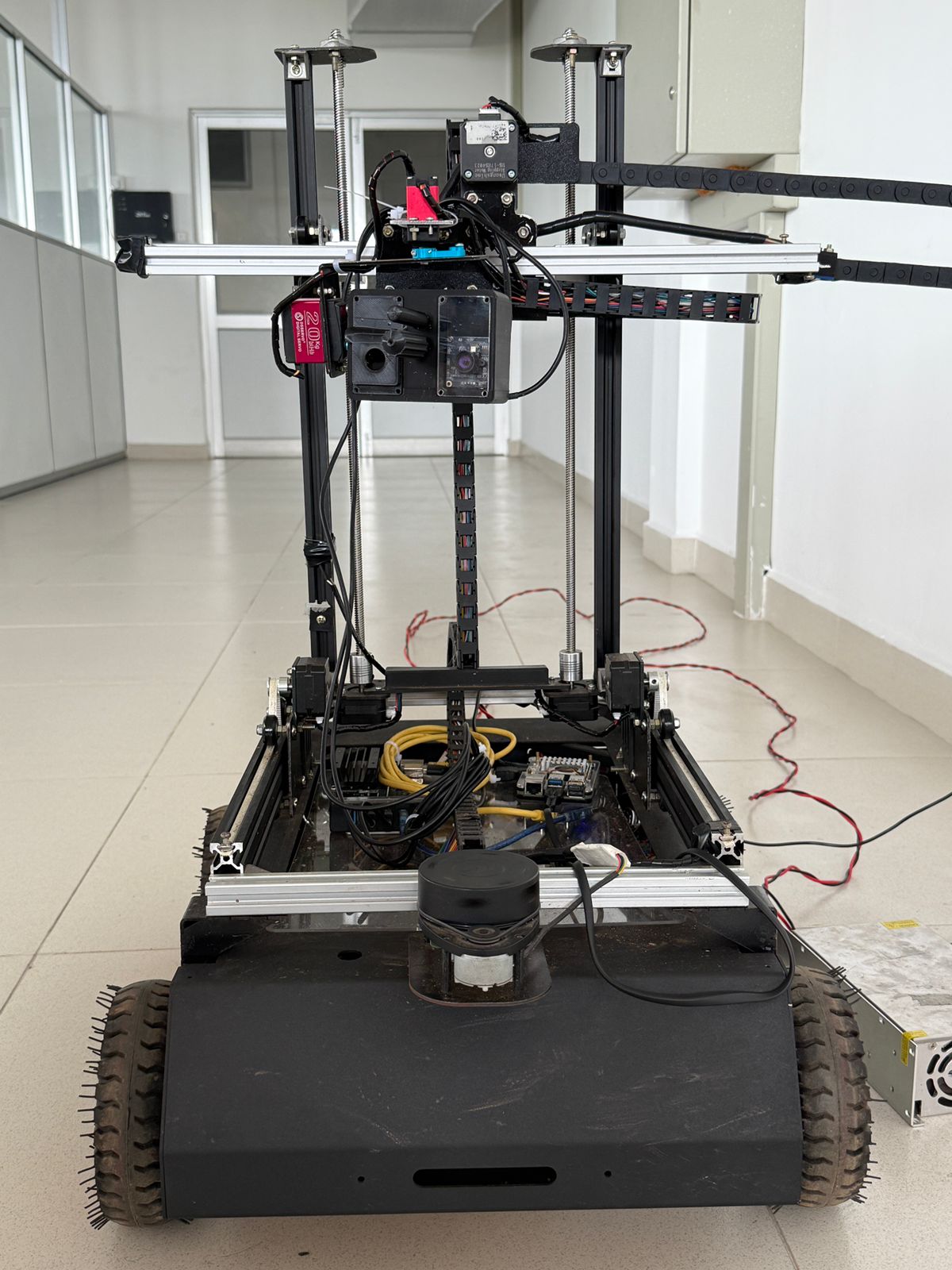

VEXEL is an autonomous mobile manipulator developed for the precise detection and treatment of crop diseases and pests within confined polytunnels. Conventional spraying approaches often fail to reach the undersides of leaves, where many pests reside, and lack the autonomy to adapt at the plant level. VEXEL overcomes these limitations through a tightly integrated system of mechanical design, perception, and navigation.

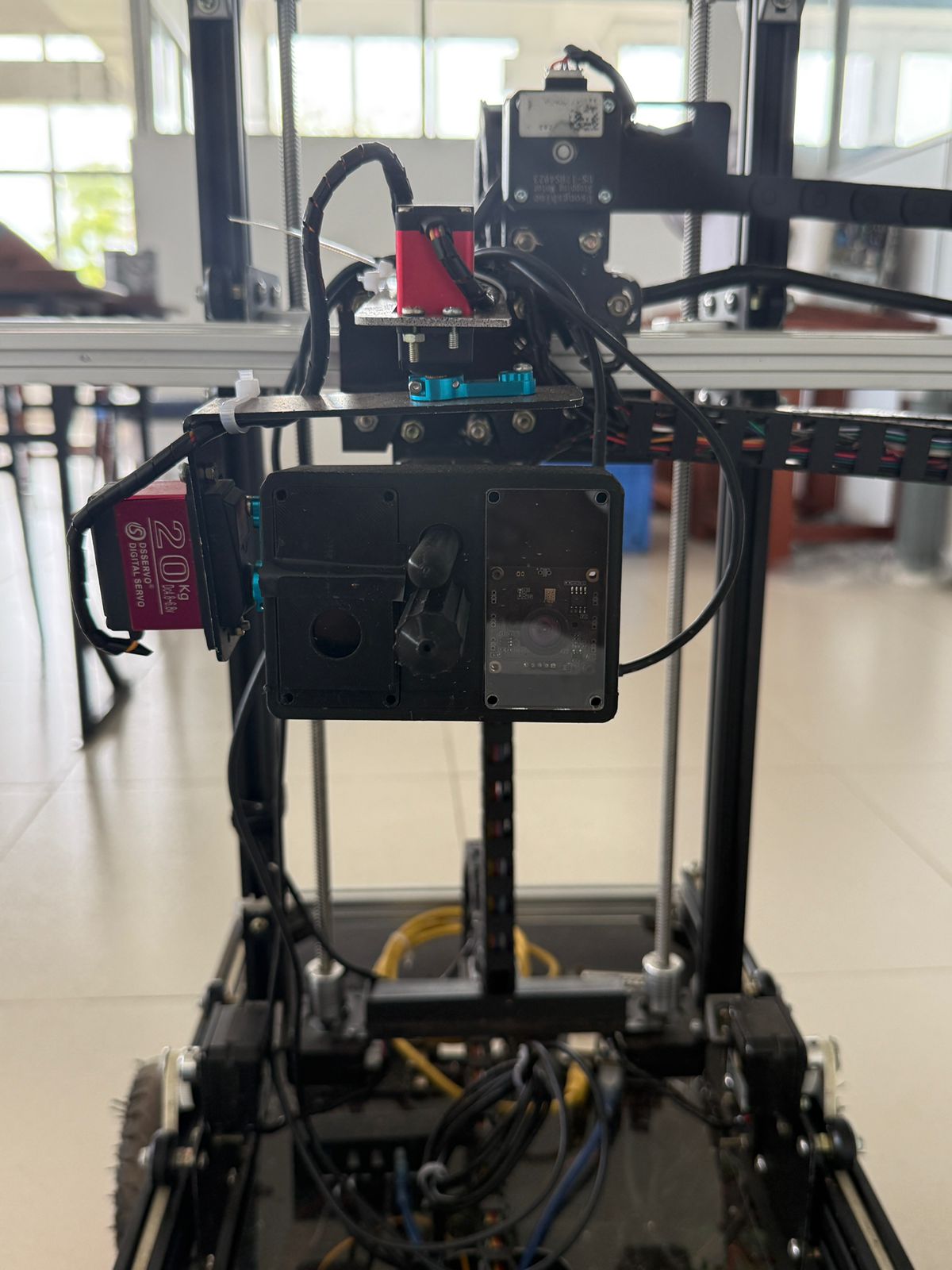

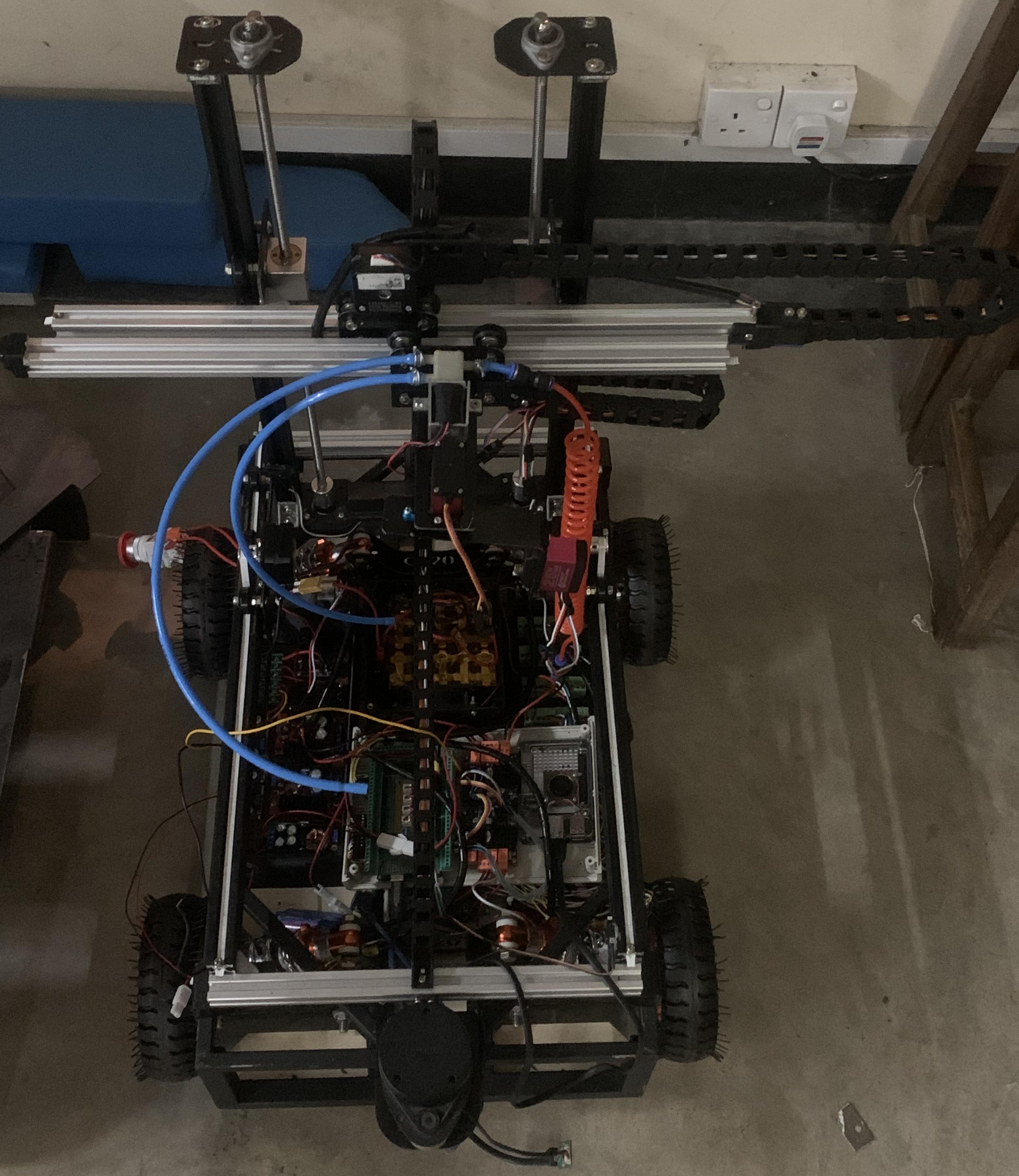

The platform is built upon a compact four-wheel base with suspension, enabling traversal of uneven terrain and narrow crop rows. A three-degree-of-freedom Cartesian arm, coupled with a dual-axis gimbal, provides the dexterity required to position the end effector accurately within the canopy. The spraying mechanism employs a dual-nozzle configuration consisting of a swirl atomizer and an upward-facing jet, ensuring both adaxial and abaxial coverage of leaves.
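As a rough illustration of how a dual-axis gimbal can be pointed at a leaf once the Cartesian arm has positioned the nozzle, the sketch below computes pan and tilt angles from two 3D points. The frame convention (x forward, y left, z up), the function name `aim_gimbal`, and the example coordinates are assumptions made for illustration; they are not taken from the VEXEL implementation.

```python
import math


def aim_gimbal(nozzle_xyz, target_xyz):
    """Return (pan, tilt) in radians that point the nozzle at the target.

    Assumed convention (hypothetical): x forward, y left, z up.
    pan  rotates about the z axis, measured from +x.
    tilt is the elevation above the horizontal plane; positive means
    aiming upward, as needed to reach the underside of a leaf.
    """
    dx = target_xyz[0] - nozzle_xyz[0]
    dy = target_xyz[1] - nozzle_xyz[1]
    dz = target_xyz[2] - nozzle_xyz[2]
    pan = math.atan2(dy, dx)
    tilt = math.atan2(dz, math.hypot(dx, dy))
    return pan, tilt


# Example: nozzle 0.3 m below and 0.3 m behind a leaf, aiming upward.
pan, tilt = aim_gimbal((0.0, 0.0, 0.2), (0.3, 0.0, 0.5))
print(f"pan={math.degrees(pan):.1f} deg, tilt={math.degrees(tilt):.1f} deg")
```

A positive tilt corresponds to the upward-facing case described above, where the nozzle sits below the leaf to cover its abaxial surface.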

The perception system combines RGB and near-infrared imaging for NDVI-based stress estimation, alongside machine-learning pipelines for disease classification. Bacterial leaf spot is detected using a Random Forest classifier with Histogram of Oriented Gradients (HOG) features, while mealybug infestations are quantified through robust color segmentation. In validation trials, the system achieved 78% classification accuracy and successfully flagged infestations at canopy coverage levels as low as 1%.
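For readers unfamiliar with NDVI, the index is the standard ratio (NIR - Red) / (NIR + Red) computed per pixel. The sketch below shows that calculation alongside a simple coverage fraction over a binary segmentation mask. The function names, the plain nested-list image format, and the use of 0.01 as a flagging threshold are illustrative assumptions; the project's actual pipeline and thresholds are not documented here.

```python
def ndvi(nir, red):
    """Standard NDVI for one pixel: (NIR - Red) / (NIR + Red)."""
    denom = nir + red
    if denom == 0:
        return 0.0  # no signal in either band; treat as neutral
    return (nir - red) / denom


def ndvi_map(nir_img, red_img):
    """Apply NDVI pixel-wise to two equally sized 2D lists of reflectances."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_img, red_img)
    ]


def coverage_fraction(mask):
    """Fraction of truthy pixels in a binary mask (e.g. a pest segmentation)."""
    total = sum(len(row) for row in mask)
    if total == 0:
        return 0.0
    hits = sum(1 for row in mask for v in row if v)
    return hits / total


# Hypothetical flagging threshold, chosen to mirror the 1% coverage level
# mentioned in the text; the real decision rule is an assumption.
FLAG_THRESHOLD = 0.01

nir = [[0.8, 0.7], [0.4, 0.3]]
red = [[0.2, 0.1], [0.4, 0.3]]
print(ndvi_map(nir, red))

mask = [[1, 0], [0, 0]]
print(coverage_fraction(mask) >= FLAG_THRESHOLD)
```

Healthy vegetation reflects strongly in NIR and absorbs red light, so higher NDVI values generally indicate less stressed tissue.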

Autonomy is enabled by ROS 2, leveraging SLAM Toolbox for real-time mapping and the Nav2 stack for waypoint navigation. This allows the robot to execute precise pause–detect–spray–resume cycles at the plant level, minimizing chemical waste while maximizing treatment efficacy. With a payload capacity of 20 kg, a validated runtime of over thirty minutes, and a suspension capable of handling 5 cm obstacles and ±10° tilt, VEXEL demonstrated reliable performance in full-scale prototype trials within an emulated polytunnel environment.
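The pause, detect, spray, resume cycle can be pictured as a small per-plant state sequence. The sketch below is a minimal, ROS-free illustration: `detect` and `spray` are hypothetical stand-in callables, and the waypoint names are made up. In the real system, navigation between waypoints would be handled by Nav2 rather than a simple loop.

```python
from enum import Enum, auto


class Phase(Enum):
    NAVIGATE = auto()
    PAUSE = auto()
    DETECT = auto()
    SPRAY = auto()
    RESUME = auto()


def treat_row(waypoints, detect, spray):
    """Visit each plant waypoint and spray only where detection fires.

    detect(wp) -> bool and spray(wp) are placeholders for the perception
    and actuation steps. Returns a log of (waypoint, Phase) tuples.
    """
    log = []
    for wp in waypoints:
        log.append((wp, Phase.NAVIGATE))  # drive to the plant
        log.append((wp, Phase.PAUSE))     # stop the base before imaging
        log.append((wp, Phase.DETECT))
        if detect(wp):
            spray(wp)                     # treat only affected plants
            log.append((wp, Phase.SPRAY))
        log.append((wp, Phase.RESUME))    # continue along the row
    return log


# Example with a fake detector that flags only the second plant.
sprayed = []
log = treat_row(
    ["plant_1", "plant_2", "plant_3"],
    detect=lambda wp: wp == "plant_2",
    spray=sprayed.append,
)
print(sprayed)
```

Skipping the spray phase for plants with no detection is what keeps chemical use proportional to the number of affected plants.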

System Architecture

Demo Video

Skills Involved

- ROS 2 (SLAM Toolbox, Nav2)

- C++ & Python for real-time control

- Motion planning & kinematics (3-DoF arm, 2-DoF gimbal)

- Computer vision & machine learning (NDVI, Random Forest)

- Multispectral sensing & data fusion (RGB, NIR, LiDAR)

- System-level design & integration

- Multibody simulations (Gazebo, RViz, MATLAB/Simulink)

- Prototyping & validation of full-scale robotic platforms